糗事百科实例:

爬取糗事百科段子,假设页面的URL是 http://www.qiushibaike.com/8hr/page/1

要求:

使用requests获取页面信息,用XPath / re 做数据提取

获取每个帖子里的

用户头像链接、用户姓名、段子内容、点赞次数和评论次数保存到 json 文件内

参考代码

#qiushibaike.py

#import urllib

#import re

#import chardet

import requests

from lxml import etree

page = 1

url = 'http://www.qiushibaike.com/8hr/page/' + str(page)

headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36',

'Accept-Language': 'zh-CN,zh;q=0.8'}

try:

response = requests.get(url, headers=headers)

resHtml = response.text

html = etree.HTML(resHtml)

result = html.xpath('//div[contains(@id,"qiushi_tag")]')

for site in result:

item = {}

imgUrl = site.xpath('./div/a/img/@src')[0].encode('utf-8')

username = site.xpath('./div/a/@title')[0].encode('utf-8')

#username = site.xpath('.//h2')[0].text

content = site.xpath('.//div[@class="content"]/span')[0].text.strip().encode('utf-8')

# 投票次数

vote = site.xpath('.//i')[0].text

#print site.xpath('.//*[@class="number"]')[0].text

# 评论信息

comments = site.xpath('.//i')[1].text

print imgUrl, username, content, vote, comments

except Exception, e:

print e

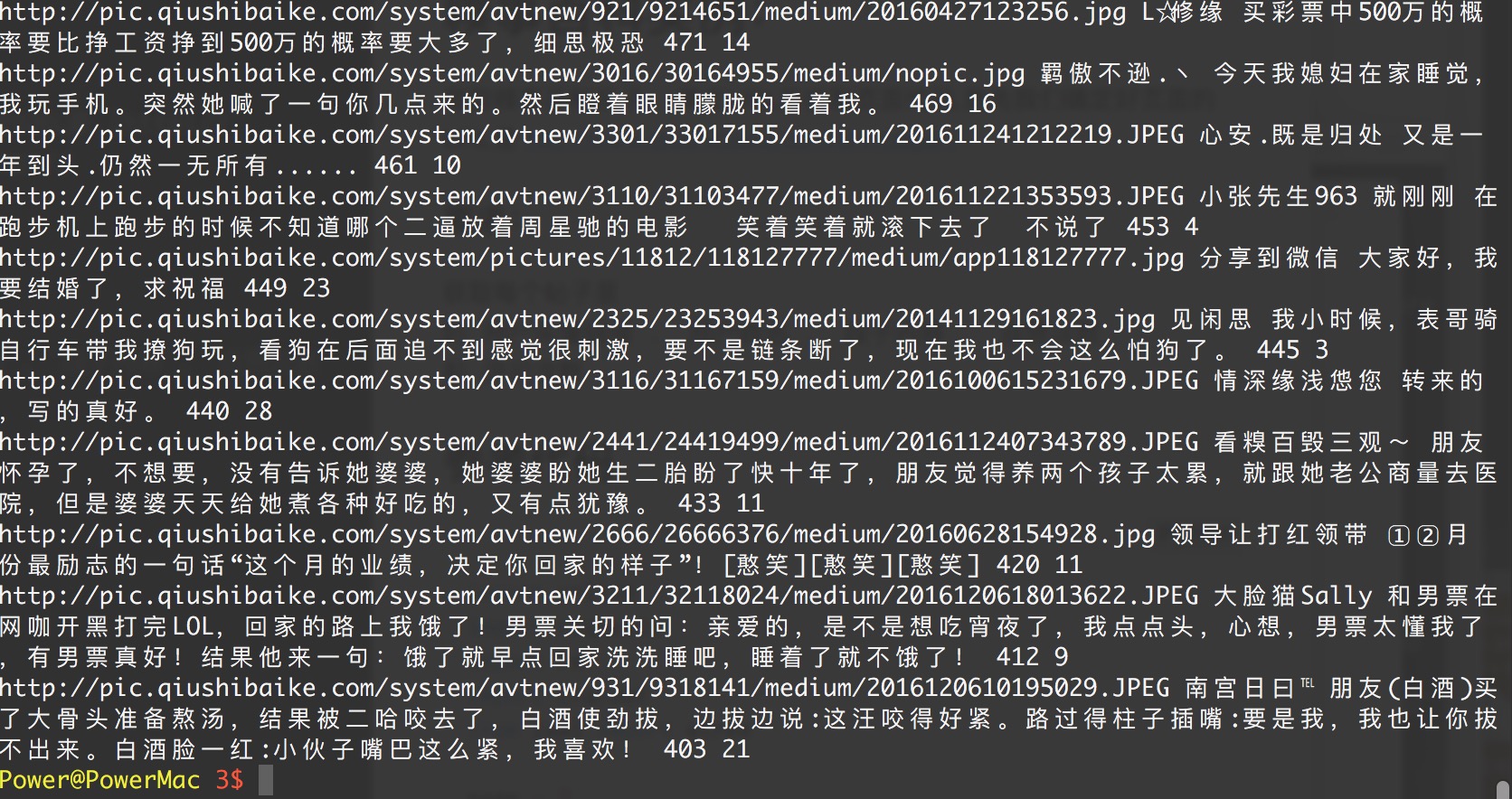

演示效果